What is an identity graph? How it works and why it matters

Danika Rockett

Content Marketing Manager

14 min read

December 16, 2025

Imagine the challenge of understanding customer actions when they are spread across various devices and channels. Without a clear way to connect these signals, your data is just noise.

An identity graph gives you the missing link to unify your customer data and truly understand who is behind every click, tap, and login. With the right approach, you turn scattered identifiers into a single, trusted view of every customer.

Main takeaways:

- An identity graph unifies fragmented identifiers like emails, device IDs, and logins into a single customer profile that works across channels and devices

- By solving the problem of identity resolution, identity graphs provide the structure needed to link disconnected data points and build a reliable view of the customer

- Modern data teams use identity graphs to power accurate attribution, seamless personalization, efficient operations, and privacy-compliant data management

- Building an identity graph involves collecting identifiers, linking them deterministically and probabilistically, mapping relationships, delivering resolved profiles, and maintaining updates over time

- With real-time, privacy-first identity graphs, organizations can improve analytics, strengthen compliance, and deliver consistent customer experiences across every touchpoint

What is an identity graph?

An identity graph is a data structure that connects multiple identifiers like email addresses, device IDs, and account logins to create a unified customer profile. It helps you recognize the same person across different devices, sessions, and channels where they interact with your business.

Identity graphs solve a fundamental problem in modern data management: fragmented customer identities. When users switch between mobile apps, websites, and offline channels, they create disconnected data points that are difficult to unify.

How does an identity graph work?

At its core, an identity graph organizes customer data around unique identifiers, either anonymous (like cookies or device IDs) or known (like emails or account numbers). These identifiers are stored as nodes, and their relationships are stored as edges, creating a network that shows how different signals connect to the same individual.
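To make the nodes-and-edges idea concrete, here is a minimal in-memory sketch in Python. It is only an illustration and does not reflect how any particular product stores its graph. The `IdentityGraph` class, the `(type, value)` node keys, and the sample identifiers are all hypothetical.

```python
# Hypothetical node key: (identifier_type, value), e.g. ("email", "ana@example.com").
Node = tuple[str, str]


class IdentityGraph:
    """Undirected graph: identifiers are nodes, observed links between them are edges."""

    def __init__(self) -> None:
        self.adjacency: dict[Node, set[Node]] = {}

    def add_identifier(self, node: Node) -> None:
        # A node can exist before it is linked to anything (e.g. a fresh cookie).
        self.adjacency.setdefault(node, set())

    def link(self, a: Node, b: Node) -> None:
        # An edge records evidence that both identifiers belong to the same person.
        self.add_identifier(a)
        self.add_identifier(b)
        self.adjacency[a].add(b)
        self.adjacency[b].add(a)

    def neighbors(self, node: Node) -> set[Node]:
        # Return a copy so callers cannot mutate the graph by accident.
        return set(self.adjacency.get(node, set()))


graph = IdentityGraph()
email = ("email", "ana@example.com")
graph.link(("anonymous_id", "cookie-123"), email)  # anonymous web visitor logs in
graph.link(("device_id", "ios-42"), email)          # same login on a phone
```

Here the email node links to both the cookie and the phone's device ID, so walking its edges reaches every signal tied to that person.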

Once built, the graph allows you to:

- Link fragmented identifiers across devices, channels, and accounts

- Group relationships at different levels (user, account, household)

- Produce resolved profiles that can be consumed by downstream tools

- Evolve dynamically as new identifiers are collected or old ones change

In short, the graph provides a living structure that connects disparate identifiers into a single, trusted customer profile.

💡 Identity graph vs. knowledge graph: While identity graphs focus on connecting user identifiers, knowledge graphs organize broader information about entities and their relationships. Identity graphs are specialized for customer data unification.

Benefits and use cases of identity graphs

The identity resolution market is expanding fast, anticipated to grow from approximately $1.5 billion in 2023 to $5.8 billion by 2032. This rapid growth underscores how critical identity graphs are becoming: they're the underlying data structure that powers identity resolution at scale.

As companies invest more in connecting fragmented identifiers across devices and channels, identity graphs are emerging as the core infrastructure for delivering accurate attribution, personalization, and compliance in customer data strategies.

Accurate attribution across touchpoints

Identity graphs connect user actions across multiple devices and channels. This helps you attribute conversions correctly, even when customers switch between mobile and desktop or use multiple browsers.

Example: A user researches on their phone, opens an email on their tablet, and purchases on their laptop. With an identity graph, you can track this journey as a single customer path rather than three disconnected interactions.
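The example above can be sketched in a few lines of Python. The sketch assumes the identity graph has already produced a hypothetical `id_map` that maps each device ID to a resolved customer ID. Grouping events by that resolved ID turns three disconnected interactions into one ordered path, and first-touch and last-touch attribution then fall out directly. The event fields and IDs are made up for illustration.

```python
from collections import defaultdict


def journeys(events: list[dict], id_map: dict[str, str]) -> dict[str, list[str]]:
    """Group touchpoints by resolved customer ID, in time order."""
    paths: dict[str, list[str]] = defaultdict(list)
    for event in sorted(events, key=lambda e: e["ts"]):
        # Fall back to the raw device ID when the graph has not resolved it yet.
        customer = id_map.get(event["device_id"], event["device_id"])
        paths[customer].append(event["touchpoint"])
    return dict(paths)


# Hypothetical data mirroring the example: phone, then tablet, then laptop.
events = [
    {"device_id": "laptop-1", "ts": 3, "touchpoint": "purchase"},
    {"device_id": "phone-1", "ts": 1, "touchpoint": "research"},
    {"device_id": "tablet-1", "ts": 2, "touchpoint": "email_open"},
]
id_map = {"phone-1": "cust-7", "tablet-1": "cust-7", "laptop-1": "cust-7"}

path = journeys(events, id_map)["cust-7"]
first_touch, last_touch = path[0], path[-1]
```

Calling `journeys(events, {})` without the mapping returns three separate one-step paths, which is the fragmented view the graph is meant to fix.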

Consistent personalization

With a unified identity, you can deliver relevant experiences regardless of where customers interact with your business. This creates seamless customer journeys that build trust and engagement. Identity graph technology makes it possible to maintain context as customers move between different parts of your business.

- Product recommendations stay consistent across devices

- Content preferences follow users between channels

- Service history remains accessible across touchpoints

Efficient data operations

Identity graphs consolidate duplicate records and resolve inconsistencies. This improves data quality and reduces engineering overhead in several ways:

- Deduplication: Eliminates multiple instances of the same customer

- Data hygiene: Resolves conflicting information across sources

- Operational efficiency: Simplifies downstream data pipelines

By maintaining a single reference point for each customer, an identity graph reduces data management complexity.

Privacy and compliance management

A well-designed identity graph enables consent-aware, privacy-compliant profiling by binding user consent to each identifier and ensuring automated enforcement across sources and usage contexts.

Identity graphs help enforce privacy controls and consent management at the profile level. This capability is increasingly critical as regulations like GDPR and CCPA require careful handling of personal data.

- Track consent status across touchpoints

- Implement data deletion or access requests efficiently

- Apply consistent privacy policies to all customer data
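Here is a rough sketch of how profile-level enforcement can work. It assumes the graph exposes a hypothetical `id_map` from each identifier to its resolved profile's virtual ID. A consent revocation or deletion request on any one identifier can then be expanded to every identifier in the same profile. The function names and dictionaries are illustrative. A real system would also push these changes to downstream stores and destinations, and would index identifiers by virtual ID instead of scanning the whole map.

```python
def linked_identifiers(id_map: dict[str, str], identifier: str) -> set[str]:
    """Every identifier that shares a resolved profile (virtual ID) with `identifier`."""
    vid = id_map.get(identifier)
    if vid is None:
        return {identifier}  # unresolved identifier: act on it alone
    return {i for i, v in id_map.items() if v == vid}


def revoke_consent(id_map: dict[str, str], consent: dict[str, bool], identifier: str) -> None:
    # A revocation received on one touchpoint applies to the whole profile.
    for i in linked_identifiers(id_map, identifier):
        consent[i] = False


def erase(id_map: dict[str, str], consent: dict[str, bool], identifier: str) -> set[str]:
    # A deletion request removes every linked identifier, not just the one named.
    targets = linked_identifiers(id_map, identifier)
    for i in targets:
        id_map.pop(i, None)
        consent.pop(i, None)
    return targets
```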

Leading identity graph companies build privacy and governance directly into their architecture rather than adding it as an afterthought, often employing behavioral analytics to proactively detect anomalies.

Cross-functional value delivery

Identity graphs provide a unified data foundation that breaks down silos between marketing, product, analytics, and customer support teams, enabling coordinated customer experiences across touchpoints.

Each department can operate from the same "single customer view" while tailoring use cases to their specific needs, eliminating data inconsistencies that lead to poor customer experiences and inefficient operations.

For example:

- Support agents access complete interaction histories across channels for contextual service delivery

- Product teams analyze cross-platform usage patterns to improve features

- Marketers create segment-specific journeys based on holistic behavior

- Data scientists build more accurate churn prediction and lifetime value models using comprehensive profile data

The problem of identity resolution

Modern data isn't just about tracking events over time—it's about connecting those events back to the real people behind them. Traditional databases and warehouses are excellent for time-based queries (like "How many signups did we get this week?"), but they struggle when the question shifts to "Who is this customer across devices, channels, and sessions?"

That's the core challenge of identity resolution: consolidating fragmented data points into a single, reliable view of each customer. A person might appear as an anonymous browser cookie, a mobile app login, and an email address, all disconnected unless you have the means to tie them together.

Solving this requires a data structure that can handle:

- Massive volumes of connections (person → device → account → event).

- Fast indexing and lookup to match new identifiers against existing profiles in real time.

- Scalability to support millions of customers and billions of events without bottlenecks.

This is why identity graphs are built on graph-based models rather than flat tables. Graph databases represent identifiers as nodes and the relationships between them as edges, making it possible to unify multiple IDs into one connected profile. Without this approach, businesses face duplicate records, inconsistent attribution, and incomplete personalization, problems that only intensify at scale.

How to create an identity graph

Building an identity graph is not just a technical exercise, but an ongoing process of collecting identifiers, linking them accurately, and ensuring the system evolves as customers and regulations change. Here's a step-by-step guide:

Step 1: Collect identifiers

The foundation of any identity graph is the quality and breadth of the identifiers you capture. These come in two main types:

- Deterministic identifiers: Data points that uniquely identify a customer, such as email addresses, phone numbers, account logins, or loyalty card IDs.

- Probabilistic identifiers: Signals that suggest a likely connection, such as IP addresses, browser fingerprints, or device IDs.

Best practices:

- Collect identifiers from every possible touchpoint—websites, mobile apps, CRM, POS systems, call centers, and even offline events.

- Standardize formats early (e.g., phone numbers with country codes, consistent email casing) to prevent mismatches later.

- Tag identifiers with metadata such as timestamp, source system, and consent status to improve reliability and compliance.
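The standardization and tagging practices above might look like this in Python. This is a simplified sketch. The `Identifier` record and its fields are hypothetical. Lowercasing the full email address is a common matching convention, not a rule of the email standard. The phone normalizer is only a rough approximation of E.164 formatting: it assumes that numbers written without a leading `+` are national numbers, with no trunk prefix, for a default country. Production pipelines usually rely on a dedicated phone-number parsing library instead.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Identifier:
    id_type: str            # e.g. "email" or "phone"
    value: str              # normalized value used for matching
    source: str             # source system, e.g. "web", "crm", "pos"
    consent: bool           # consent status captured with the identifier
    observed_at: datetime   # when the identifier was seen


def normalize_email(raw: str) -> str:
    # Assumption: treat the whole address as case-insensitive.
    return raw.strip().lower()


def normalize_phone(raw: str, default_country_code: str = "1") -> str:
    # Keep digits only; add a country code if the number was not given with "+".
    # Assumption: national numbers arrive without a trunk prefix.
    digits = re.sub(r"\D", "", raw)
    if raw.strip().startswith("+"):
        return "+" + digits
    return "+" + default_country_code + digits


NORMALIZERS = {"email": normalize_email, "phone": normalize_phone}


def make_identifier(id_type: str, raw: str, source: str, consent: bool) -> Identifier:
    # Unknown identifier types only get surrounding whitespace trimmed.
    normalize = NORMALIZERS.get(id_type, str.strip)
    return Identifier(id_type, normalize(raw), source, consent, datetime.now(timezone.utc))
```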

Step 2: Link identifiers

Once identifiers are collected, the goal is to determine which belong to the same customer. This involves two approaches:

- Deterministic linking: Creates exact matches. For example, if a user logs in with the same email across two devices, those sessions are confidently tied together.

- Probabilistic linking: Uses statistical models to infer likely connections. For instance, multiple sessions from the same IP and device fingerprint within a short timeframe may reasonably belong to one individual.
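The two linking approaches can be expressed as rules that emit edges tagged with a method and a confidence score. This sketch is a rule-based illustration, not a statistical model. The `Session` and `Link` types are hypothetical. The 30-minute window and the 0.6 confidence are placeholder values, whereas a real probabilistic matcher would learn or tune its scores.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Session:
    session_id: str
    email: Optional[str]   # set only when the user logged in
    ip: str
    fingerprint: str
    ts: float              # epoch seconds


@dataclass(frozen=True)
class Link:
    a: str
    b: str
    method: str            # "deterministic" or "probabilistic"
    confidence: float


# Hypothetical tuning values, for illustration only.
WINDOW_SECONDS = 30 * 60
PROBABILISTIC_CONFIDENCE = 0.6


def link_sessions(sessions: list[Session]) -> list[Link]:
    links = []
    # Compares every pair (O(n^2)); fine for a sketch, not for production volumes.
    for i, s in enumerate(sessions):
        for t in sessions[i + 1:]:
            if s.email is not None and s.email == t.email:
                # Same login email on both sessions: an exact match.
                links.append(Link(s.session_id, t.session_id, "deterministic", 1.0))
            elif (s.ip == t.ip and s.fingerprint == t.fingerprint
                  and abs(s.ts - t.ts) <= WINDOW_SECONDS):
                # Same IP and fingerprint close in time: likely, not certain.
                links.append(Link(s.session_id, t.session_id, "probabilistic",
                                  PROBABILISTIC_CONFIDENCE))
    return links
```

Because every edge carries its method and score, downstream consumers can filter on confidence, for example by building profiles only from deterministic links.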

Best practices:

- Maintain confidence scores for each link so you can distinguish between high-certainty (deterministic) and lower-certainty (probabilistic) matches.

- Run a connected-components algorithm, for example one that repeatedly propagates the minimum ID across edges until the groupings stop changing. Every identifier in a resulting group then shares one virtual ID.

- Reevaluate probabilistic matches over time as more deterministic data arrives.
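Here is a minimal Python sketch of minimum-ID propagation, assuming edges arrive as pairs of identifier strings. Each node starts labeled with its own ID. Every pass pushes the smaller label across each edge, and the loop stops once a full pass changes nothing. At that point every node carries the smallest ID in its connected component, which can serve as the basis for a virtual ID.

```python
def connected_components_min_label(edges: list[tuple[str, str]]) -> dict[str, str]:
    """Label every node with the smallest node ID in its connected component."""
    # Start with each node labeled by its own ID.
    label: dict[str, str] = {}
    for a, b in edges:
        label.setdefault(a, a)
        label.setdefault(b, b)

    changed = True
    while changed:  # repeat until a full pass leaves every label unchanged
        changed = False
        for a, b in edges:
            smaller = min(label[a], label[b])
            # Push the smaller label across the edge in both directions.
            if label[a] != smaller:
                label[a] = smaller
                changed = True
            if label[b] != smaller:
                label[b] = smaller
                changed = True
    return label
```

A warehouse can run the same idea as repeated joins over an edges table. This in-memory loop only shows the logic.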

Step 3: Build entity relationships

Identifiers alone aren't enough; they need to be organized into meaningful structures. A graph database model allows you to connect identifiers as nodes and relationships as edges, creating a web of associations.

Examples of relationships:

- User ↔ Device: One customer using multiple devices

- Account ↔ User: A single account accessed by several users

- Household ↔ Users: Families or shared addresses

- Session ↔ User: Linking anonymous browsing sessions back to a known individual
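One way to model typed relationships like these is to store each edge with a relation name, so the same graph can answer questions at different levels. In this sketch, the `EntityGraph` class and the relation names `has_member` and `uses_device` are hypothetical. The helper rolls device links up from individual users to a household.

```python
from collections import defaultdict


class EntityGraph:
    """Typed, directed edges: (source entity) -[relation]-> (target entity)."""

    def __init__(self) -> None:
        self.edges: dict[str, dict[str, set[str]]] = defaultdict(lambda: defaultdict(set))

    def relate(self, src: str, relation: str, dst: str) -> None:
        self.edges[src][relation].add(dst)

    def targets(self, src: str, relation: str) -> set[str]:
        # .get() avoids creating empty entries for unknown entities.
        return set(self.edges.get(src, {}).get(relation, set()))


def household_devices(graph: EntityGraph, household: str) -> set[str]:
    """Roll device relationships up from individual users to the household."""
    devices: set[str] = set()
    for user in graph.targets(household, "has_member"):
        devices |= graph.targets(user, "uses_device")
    return devices
```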

Best practices:

- Define entity models based on your business needs — some organizations focus on households, while others prioritize individual user profiles.

- Validate relationships continuously to ensure they reflect real-world behavior.

- Use visualization tools to help teams see how identifiers connect and where gaps exist.

Step 4: Deliver resolved identities

Once identifiers and relationships are unified, the graph produces resolved profiles—a single view of the customer. These profiles serve as the foundation for downstream applications, including:

- Analytics and attribution

- Personalization engines

- Marketing automation

- Compliance

- Customer support systems

The customer identity graph becomes a central source of truth that different teams can reference for consistent identity resolution.

Best practices:

- Assign virtual IDs to each connected component of the graph. All identifiers within that component inherit the same ID, ensuring consistency across systems.

- Store resolved profiles centrally so they can be accessed by different tools without duplicating data.
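Assume the graph has already produced a component label for every identifier, for example the smallest ID in its connected component. Assigning virtual IDs and building a central profile table could then look like this. The `vid_` prefix and the SHA-256-based scheme are hypothetical choices made for illustration.

```python
import hashlib
from collections import defaultdict


def virtual_id(component_label: str) -> str:
    # Hypothetical scheme: a short, stable hash of the component's label.
    return "vid_" + hashlib.sha256(component_label.encode("utf-8")).hexdigest()[:12]


def assign_virtual_ids(labels: dict[str, str]) -> dict[str, str]:
    """identifier -> virtual ID; every identifier in a component inherits the same ID."""
    return {identifier: virtual_id(label) for identifier, label in labels.items()}


def resolved_profiles(id_map: dict[str, str]) -> dict[str, list[str]]:
    """virtual ID -> its identifiers: one central record that downstream tools can read."""
    profiles: dict[str, list[str]] = defaultdict(list)
    for identifier, vid in id_map.items():
        profiles[vid].append(identifier)
    return {vid: sorted(ids) for vid, ids in profiles.items()}
```

There is one design caveat. An ID derived from the component's smallest member changes when two components merge or when a smaller identifier joins, so a real system needs a policy for carrying old virtual IDs forward.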

Step 5: Monitor and update the graph

An identity graph changes as customers adopt new devices, update contact details, or revoke consent. Continuous maintenance keeps the graph accurate and compliant.

Best practices:

- Use incremental updates instead of reprocessing the entire graph. Focus only on new nodes, edges, and the connected components they touch. This dramatically improves efficiency (profiling tests show 5–6x faster performance compared to full rebuilds).

- Incorporate version numbers or timestamps to track when edges are created. This allows you to selectively update affected parts of the graph without disrupting the rest.

- Continuously enforce privacy and compliance rules, ensuring that consent, deletion requests, and masking propagate across all linked identifiers.
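Incremental updates can be sketched with a union-find (disjoint-set) structure. This is a technique swapped in for illustration, not one the steps above prescribe. Each new edge only touches the two components it connects, and a version number on each edge lets the resolver skip edges it has already applied. The class and the `(version, a, b)` edge format are hypothetical. Union-find handles additions only. Removing an edge, for instance after a link turns out to be wrong, still requires recomputing the affected component.

```python
class IncrementalResolver:
    """Union-find over identifiers, updated one batch of new edges at a time."""

    def __init__(self) -> None:
        self.parent: dict[str, str] = {}
        self.last_version = 0  # highest edge version already applied

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            # Path halving: point x at its grandparent to keep trees shallow.
            self.parent[x] = self.parent[self.parent[x]]
            x = self.parent[x]
        return x

    def _union(self, a: str, b: str) -> None:
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            # Keep the smaller ID as the root, matching a minimum-ID convention.
            if rb < ra:
                ra, rb = rb, ra
            self.parent[rb] = ra

    def apply(self, edges: list[tuple[int, str, str]]) -> int:
        """Apply only edges newer than the last processed version; return how many."""
        new_edges = [e for e in edges if e[0] > self.last_version]
        for _, a, b in new_edges:
            self._union(a, b)
        if new_edges:
            self.last_version = max(version for version, _, _ in new_edges)
        return len(new_edges)
```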

A real-life scenario: identity graph

Imagine a user's journey across an eCommerce platform:

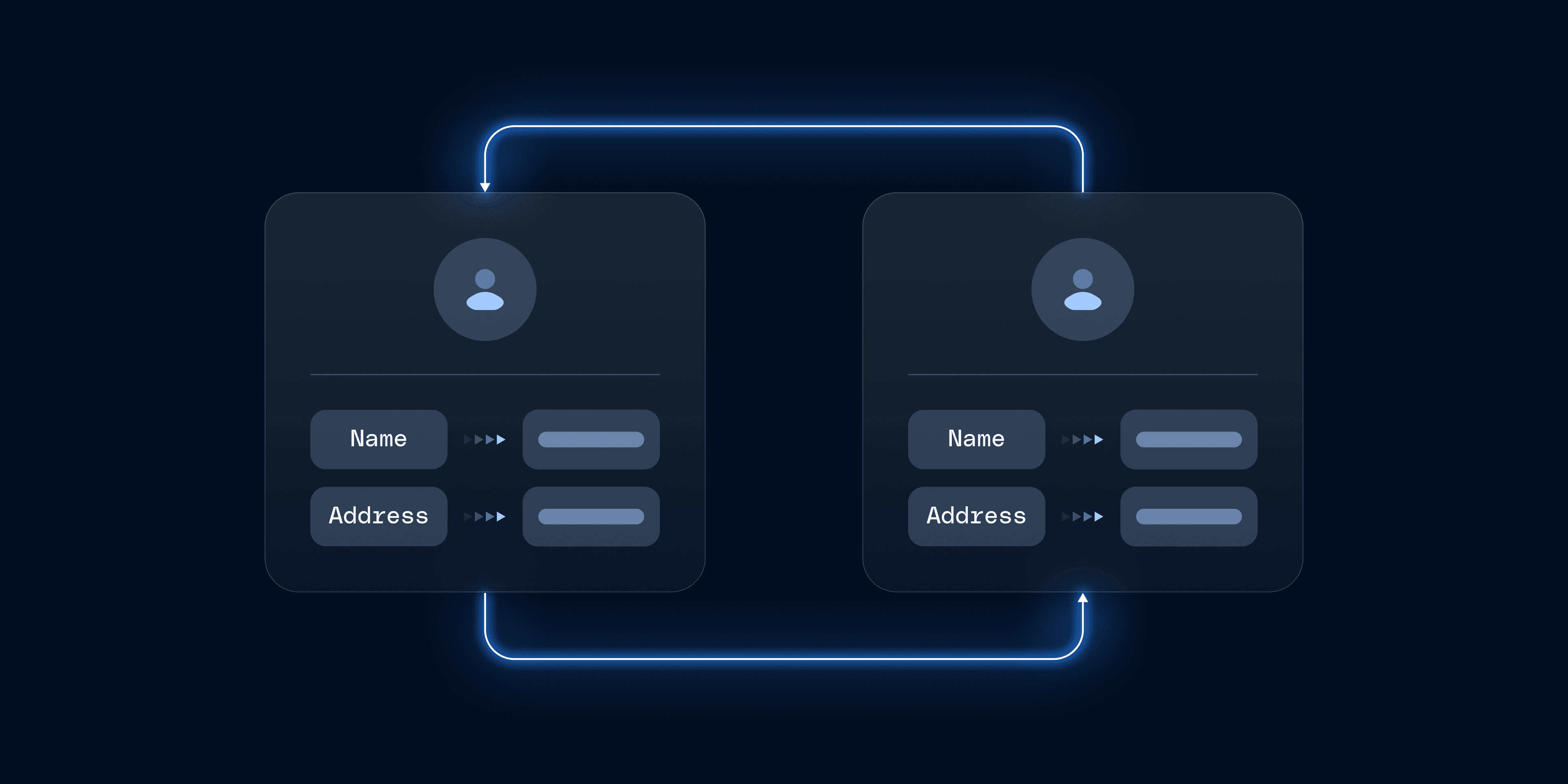

- Step 1: Browsing anonymously: The user visits the website on a laptop, browses products, but doesn't purchase. Since no identifier is provided, RudderStack assigns an anonymous ID (A-Web) and stores it in a cookie. All web events are tied to A-Web and sent to the data warehouse.

- Step 2: Mobile app login: The same user installs the mobile app. RudderStack assigns another anonymous ID (A-Mob). When the user logs in with a phone number (U-Phone), the eCommerce application explicitly passes this identifier using the identify() call. RudderStack then associates A-Mob with U-Phone, connecting mobile activity to the same user entity.

- Step 3: Check out with email: The user later returns to the website and completes a purchase, providing both an email (U-Email) and the same phone number (U-Phone). Through another identify() call, RudderStack links A-Web with U-Email and U-Phone, consolidating identifiers across sessions and devices.

At this point, all events (anonymous browsing, mobile app activity, and the final purchase) can be connected to a single virtual user ID. This is achieved by mapping the various identifiers (A-Web, A-Mob, U-Phone, U-Email) to one profile in the warehouse. Analysts can then JOIN event tables with the ID-mapping table to unify behavioral data.
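The scenario can be replayed in a short Python sketch. This is not RudderStack's implementation. The edge list stands in for the links created by the `identify()` calls, the event rows and the `vid-` naming are hypothetical, and a breadth-first search assigns each connected component one virtual ID. The final list comprehension plays the role of the JOIN between the event table and the ID-mapping table.

```python
from collections import defaultdict, deque

# Links created by the identify() calls in the scenario.
identify_edges = [
    ("A-Mob", "U-Phone"),   # Step 2: mobile login with a phone number
    ("A-Web", "U-Email"),   # Step 3: web checkout with an email...
    ("A-Web", "U-Phone"),   # ...and the same phone number
]

# Hypothetical event rows, each tagged with whichever ID was known when it fired.
events = [
    {"id": "A-Web", "event": "product_viewed"},
    {"id": "A-Mob", "event": "app_opened"},
    {"id": "A-Web", "event": "order_completed"},
]


def resolve_components(edges: list[tuple[str, str]]) -> dict[str, str]:
    """Map every identifier to one virtual ID per connected component."""
    adjacency: dict[str, set[str]] = defaultdict(set)
    for a, b in edges:
        adjacency[a].add(b)
        adjacency[b].add(a)
    id_map: dict[str, str] = {}
    for start in sorted(adjacency):
        if start in id_map:
            continue
        # Breadth-first search collects one whole component.
        component, queue = {start}, deque([start])
        while queue:
            node = queue.popleft()
            for neighbor in adjacency[node]:
                if neighbor not in component:
                    component.add(neighbor)
                    queue.append(neighbor)
        virtual_id = "vid-" + min(component)
        for node in component:
            id_map[node] = virtual_id
    return id_map


id_map = resolve_components(identify_edges)
# Equivalent of JOINing the events table with the ID-mapping table.
unified = [{**e, "virtual_id": id_map.get(e["id"], e["id"])} for e in events]
```

If you append an edge such as `("A-Web2", "U-Email")` and rerun `resolve_components`, a new device's anonymous ID lands in the same profile.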

The evolving identity graph

Identity graphs are dynamic. Suppose the same customer visits the site from a third device, such as an office desktop. RudderStack assigns a new anonymous ID (A-Web2). At first, this ID isn't tied to the existing profile. But once the user logs in with their email, RudderStack associates A-Web2 with U-Email, extending the identity graph.

Why this matters

In this scenario, the identity graph enables the platform to:

- Unify fragmented data across devices, channels, and identifiers.

- Resolve anonymous events once identifiers are provided.

- Create a single, reliable profile that supports analytics, personalization, and marketing use cases.

Instead of siloed records tied to individual IDs, the identity graph stitches them into a cohesive picture of one customer. This approach ensures that whether a user browses anonymously, logs in on mobile, or checks out on desktop, every interaction contributes to a unified, actionable profile.

Enable identity resolution with RudderStack Profiles

Identity graphs transform fragmented data into actionable, privacy-safe customer profiles. They help you improve attribution, personalization, analytics, and compliance across your organization.

RudderStack Profiles is designed for engineers and data teams who want to build and manage identity graphs within their own cloud infrastructure. With built-in privacy controls and flexible integration options, you maintain full control of your customer data.

The identity graph capabilities in RudderStack Profiles help you connect identifiers across sources while enforcing governance rules and privacy requirements. This creates a foundation for accurate analytics and personalization without compromising data security.

Request a demo to see how RudderStack Profiles can power your identity resolution strategy.

FAQs about identity graphs

What is an identity graph database?

An identity graph database is a specialized store for relationships between customer identifiers, like emails, device IDs, and logins, so you can resolve identities quickly at scale. It is optimized for linking and querying connections, not just storing rows, which makes it useful for real-time lookups and profile stitching.

How do identity graphs improve data quality?

Identity graphs improve data quality by unifying identifiers into a single profile, reducing duplicates, and preventing conflicting records across tools. When events and traits map back to a resolved ID, analytics and activation become more consistent, and data teams spend less time reconciling mismatched customer records.

Which industries benefit most from identity graphs?

Retail, financial services, media, healthcare, and technology companies often benefit most because customers move across many devices, sessions, and channels. Identity graphs help these teams connect journeys end to end, improve attribution and personalization, and maintain stronger governance as data volume and complexity increase.

Who provides and manages identity graphs?

Identity graphs are often managed by vendors that sell access to large third-party graphs, while many organizations build their own from first-party identifiers. Some teams combine both approaches, but first-party graphs typically provide more control, clearer governance, and better alignment with privacy requirements.

How do identity graphs handle privacy compliance?

Identity graphs support privacy compliance by tracking consent per identifier, enforcing suppression rules, and making deletion or access requests propagate across linked IDs. When privacy rules attach to the resolved profile, teams can apply consistent controls across destinations instead of relying on one-off fixes in every tool.
